The team at AltaVista (RIP) invented the CAPTCHA in 1997. At the time, it was a groundbreaking solution to a niche problem: preventing bots from entering URLs into the web search engine.

Fast forward to 2021, and there are more than 20 different CAPTCHA vendors. The biggest of them all, Google’s reCAPTCHA, is used by more than 6.3 million websites.

This leads us to an interesting question: Given that every human on the planet hates them and they aren’t effective at blocking bots, why are CAPTCHAs still a thing?

The Classic “Please select the photos of the traffic light” CAPTCHA

Why do 47% of the websites that use Google’s reCAPTCHA service use the “Pick the traffic lights” version?

reCAPTCHA solutions that require human interaction provide application builders and owners an easy out. They are black box solutions: no config, no decisions, and limited visibility of the impact on humans or the bots that are getting through. A classic see-no-evil, hear-no-evil scenario.

Ultimately, all image-based CAPTCHAs are limited by the human versus bot conundrum: how can you make an image test that is consistently too hard for bots, but easy enough for humans to pass?

This is true, too, of the game-like CAPTCHAs (spin the image, etc.) where the puzzle is implied in the visual rather than stated in text. These rely on consistent human interpretation, which can be limited in many different ways.

The rationale that a CAPTCHA is cheap, easy, and doesn’t impact performance metrics fails to acknowledge the overall session latency, UX impact, and session abandonment.

I have an idea: Let’s build a new CAPTCHA! ReCAPTCHA v3…

Google, amongst a group of other vendors, launched new and improved CAPTCHAs!

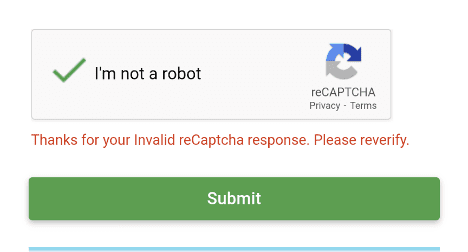

Google’s latest (paid) version acknowledges user frustration; however, it now requires the application owners to create and manage the risk scores that differentiate humans and bots.
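To make "managing risk scores" concrete, here is a minimal sketch of the decision an application owner ends up writing. reCAPTCHA v3 verification returns a `score` between 0.0 and 1.0, where higher means more likely a legitimate interaction. The `decide` function, the `Decision` names, and both thresholds are assumptions for illustration, not values from Google or from this article.

```typescript
// Subset of the fields returned by reCAPTCHA v3 verification.
interface VerifyResult {
  success: boolean; // whether the token was valid
  score: number;    // 0.0 (likely bot) to 1.0 (likely human)
}

// Hypothetical actions; the application owner decides what each one means.
type Decision = "allow" | "step-up" | "block";

// Illustrative thresholds that the application owner has to choose and tune.
const ALLOW_AT_OR_ABOVE = 0.7;
const BLOCK_BELOW = 0.3;

function decide(result: VerifyResult): Decision {
  if (!result.success) return "block";
  if (result.score >= ALLOW_AT_OR_ABOVE) return "allow";
  if (result.score < BLOCK_BELOW) return "block";
  return "step-up"; // the ambiguous middle band still needs a product decision
}
```

The middle band is where the hard choice sits: every score that is neither clearly human nor clearly bot still needs an owner-defined outcome.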

Google’s reCAPTCHA v3 results in less human impact, but the bots now have a security control that they can evade. Understanding and limiting the differences between headless Chromium and Chrome is a (dark) art that enables bots to obtain the same risk score as humans.

Other variations of “modern” CAPTCHA require users to rotate images and move puzzle pieces. All of these services leverage human interaction to train a data model for bot detection. The consumer is the product, and the currency is time and patience.

A bot builder’s experience

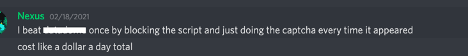

Bot builders operate as niche communities, assisting each other and sharing knowledge. These communities gravitate towards common targets (e.g. sneakerbots) or common open source solutions (e.g. Puppeteer Extra Stealth).

As a bot builder, you have two approaches when attempting to evade a CAPTCHA: 1) be undetectable, or 2) automate the process of solving the CAPTCHA.

The market for CAPTCHA-solving solutions is hot. Services such as 2CAPTCHA ensure that CAPTCHAs present no obstacles to well-funded, semi-technical bot builders. In the case of 2CAPTCHA, they have over 300 reference cases of bots that are using their solution. Here are examples of bots using 2CAPTCHA.

As a bot builder, you can solve your problem for less than $1 per 1,000 solved CAPTCHAs.
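As a rough back-of-the-envelope sketch, the arithmetic looks like this. The $1 per 1,000 figure is the upper bound quoted above; the function name and the campaign size of 250,000 solves are made up for illustration.

```typescript
// Upper-bound price from the text: $1 per 1,000 solved CAPTCHAs.
const USD_PER_THOUSAND_SOLVES = 1;

// Cost in USD of having `solves` CAPTCHAs solved at the given price.
function solvingCostUsd(
  solves: number,
  usdPerThousand: number = USD_PER_THOUSAND_SOLVES,
): number {
  return (solves / 1000) * usdPerThousand;
}

// A hypothetical campaign needing 250,000 solved CAPTCHAs.
console.log(solvingCostUsd(250000)); // 250
```

At that price, the CAPTCHA is a small line item in a bot operator's budget rather than a barrier.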

So now the cheap and easy security control is frustrating your paying customers, but not the fraudsters…

Equally, applying image processing allows bot builders to automate the solving of game-like CAPTCHAs.

Bot management vendors aren’t helping reduce confusion

Why are security vendors that use CAPTCHAs writing blogs about how bad CAPTCHA is?

A bot mitigation solution is built around four main components:

- Client-side detection: Inspecting the application that generates a request

- Server-side analysis/actions: Predetermined indicators of automation

- Data/behavioural analysis: Static and/or AI-driven analysis

- Mitigating actions: Block, redirect, resource consumption, CAPTCHA

The application of machine learning (ML) models to bot detection is typically non-deterministic and prone to false positives. ML is typically applied out of band, and CAPTCHA is used to let back through any humans who were accidentally flagged as bots. This isn’t taking ownership of the difficult product design decisions that need to be made. You end up with a mousetrap that catches humans every so often, yet it doesn’t catch the sophisticated bots.

Am I a mouse?

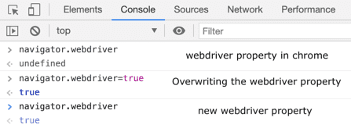

A well-known example is the navigator.webdriver property within a browser. This read-only property of the navigator interface indicates whether the user agent is controlled by automation. Bot builders often overwrite it, but when it is set it provides a clear signal of browser automation.
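Reading that signal takes one line. The sketch below is illustrative only: `NavigatorLike` and `reportsAutomation` are hypothetical names, and the function accepts any object with a `webdriver` field so that it can run outside a browser.

```typescript
// Minimal shape of the part of `navigator` this check reads.
interface NavigatorLike {
  webdriver?: boolean;
}

// Returns true only when the user agent itself reports automation.
function reportsAutomation(nav: NavigatorLike): boolean {
  return nav.webdriver === true;
}

// In a browser you would pass the real object: reportsAutomation(navigator)
console.log(reportsAutomation({ webdriver: true }));  // true
console.log(reportsAutomation({ webdriver: false })); // false
```

A `false` or missing value proves little, since the property can be overwritten, but a `true` value is hard to explain as anything other than automation.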

So we ran the following super hacky test against the same vendor that nexus was targeting:

Step 1: Identify website protected by vendor and load in Chrome

Step 2: Open a console in Chrome, and overwrite the webdriver property as the page loads

Step 3: If vendor serves up a CAPTCHA, solve it whilst maintaining navigator.webdriver=true
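One plausible way to carry out Step 2 is sketched below; the exact snippet used in the test is not given here, so treat this as an assumption. `forceWebdriverTrue` and `WebdriverHolder` are hypothetical names, and a stand-in object replaces the real `navigator` so the sketch runs outside a browser.

```typescript
// Minimal shape of the part of `navigator` this sketch touches.
interface WebdriverHolder {
  webdriver?: boolean;
}

// Hypothetical helper: redefine `webdriver` so it always reads as true.
function forceWebdriverTrue(target: WebdriverHolder): void {
  Object.defineProperty(target, "webdriver", {
    get: () => true,
    configurable: true,
  });
}

// Stand-in object; in a Chrome console the argument would be `navigator`.
const standIn: WebdriverHolder = { webdriver: false };
forceWebdriverTrue(standIn);
console.log(standIn.webdriver); // true
```

Note that this makes the browser look more like a bot, not less, which is the point of the test.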

TL;DR – Vendor ignores an unambiguous signal of browser automation, serves CAPTCHA, and then allows us to access the website.

This makes no sense. We have passed the vendor the clearest possible signal that we are automating our browser, but their product is not configured to make decisions. The CAPTCHA solution allows them to claim an outcome without conviction in their in-product decision making. “We don’t actually know if you are a bot, so please help us by rotating this image” and the like.

Given the prevalence of CAPTCHA-solving services on the market, this raises several questions as to the efficacy of bot mitigation products that fall back on CAPTCHA.

So, why haven’t we killed the CAPTCHA?

The rationale for using CAPTCHA solutions appears to be similar between security vendors and application owners – it’s a decision avoidance solution.

There is no doubt that differentiating between humans and bots can be challenging. Whether you deploy a self-managed CAPTCHA solution or outsource to a security vendor that uses CAPTCHA, the same issues exist for application owners. You are taxing potential customers and risking lost sales, but not really impacting bot builders.

Simple is good, but it needs to be effective

At Kasada, the core pillars of our product philosophy are simplicity, innovation, and long-term efficacy. We firmly believe that modern security solutions need to deliver simple, easy-to-integrate, and easy-to-manage outcomes.

This is where most blogs by bot mitigation providers say that CAPTCHAs have their place and should be implemented as part of a defense-in-depth approach. While we strongly believe that defense-in-depth is important, Kasada’s solution allows you to defend against malicious automation without a CAPTCHA, and without relying on your paying customers to validate that they are indeed human. Our solution couples the ability to detect automation with a mitigation solution that controls and eradicates bot activity.

“For us, it’s absolutely critical to not have any visible customer flow interventions, be it things like reCAPTCHAs or challenge pages, or anything like that. In the entertainment industry in general, and in betting, customers literally would abandon a registration process purely just because they have one extra step or five seconds to wait for a challenge page to disappear.”

— Nik Pinchuk, Global Head of Engineering, PointsBet

To learn more about how Kasada allows customers to ditch the CAPTCHA for good, schedule your customized free threat briefing.