Bad Bots Disguise as Humans to Bypass Detection

Bot mitigation providers place significant emphasis on stopping bots with the highest degree of accuracy. After all, it only takes a small number of bad bots getting through your defenses to wreak havoc on your online business. One challenge of stopping bad bots is keeping false positives, where a human is incorrectly categorized as a bot, to a minimum.

The more aggressively rules are tuned within a bot mitigation solution, the more susceptible the solution becomes to false positives, because it must decide whether to grant requests that have indeterminate risk scores. As a result, real users are inadvertently blocked from websites or served CAPTCHAs to validate that they are indeed human. This inevitably creates a poor user experience and lowers online conversions.
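The trade-off described above can be illustrated with a minimal sketch. It assumes a scoring engine that assigns each request a risk score between 0 and 1 and two tunable thresholds; the `Thresholds` class, the `decide` function, and the sample traffic are all hypothetical and do not describe any particular vendor's product.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Thresholds:
    challenge: float  # scores at or above this are served a CAPTCHA
    block: float      # scores at or above this are blocked outright


def decide(risk_score: float, t: Thresholds) -> str:
    """Map a risk score in [0, 1] to an action."""
    if risk_score >= t.block:
        return "block"
    if risk_score >= t.challenge:
        return "challenge"
    return "allow"


# Hypothetical labeled traffic: (true label, risk score from the engine).
# The human at 0.55 and the bot at 0.60 are the "indeterminate" cases.
TRAFFIC = [("human", 0.20), ("human", 0.55), ("bot", 0.60), ("bot", 0.90)]


def false_positives(t: Thresholds) -> int:
    """Humans who were challenged or blocked instead of allowed."""
    return sum(1 for label, score in TRAFFIC
               if label == "human" and decide(score, t) != "allow")


def missed_bots(t: Thresholds) -> int:
    """Bots that were allowed straight through."""
    return sum(1 for label, score in TRAFFIC
               if label == "bot" and decide(score, t) == "allow")


lenient = Thresholds(challenge=0.70, block=0.95)
aggressive = Thresholds(challenge=0.50, block=0.80)

for name, t in (("lenient", lenient), ("aggressive", aggressive)):
    print(name, "false positives:", false_positives(t),
          "missed bots:", missed_bots(t))
```

With this made-up traffic, tightening the thresholds catches the bot that the lenient settings miss, but only by also challenging a real user whose score falls in the same indeterminate band.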

Much of the ongoing innovation in modern bot mitigation solutions has been a reaction to the increasing sophistication of the adversary. Bad bots increasingly look and act like humans in an attempt to evade detection, which makes it more difficult to rely on rules, behaviors, and risk scores for decisioning and makes false positives more pronounced.

Humans Now Disguising Themselves for Privacy

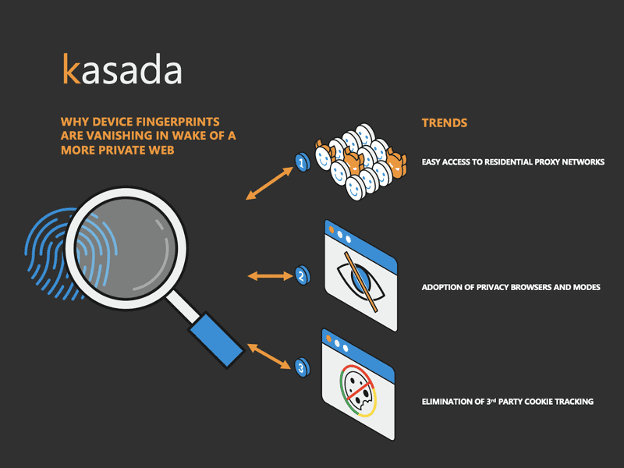

A more recent trend is exacerbating false positives and, without proper innovation, renders legacy bot mitigation solutions that depend on rules and risk scores inadequate: people are increasingly taking action to protect their privacy on the Internet. Ironically, the move towards more privacy on the web can actually compromise security by making it even more difficult to distinguish between humans and bots.

To understand why, it’s essential to know how the majority of bot detection techniques work. They rely heavily on device fingerprinting to analyze device attributes and bad behavior. Device fingerprinting is performed client-side and collects information such as IP address, user agent header, advanced device attributes (e.g. hardware imperfections), and cookie identifiers. Over the years, the information collected from the device fingerprint has become a major determinant for the analytics engines used to decide whether a request comes from a bot or a human.

Device fingerprints, in theory, are supposed to work like real fingerprints, with each one uniquely identifying a user. Fingerprinting technology has evolved towards this goal (so-called hi-def device fingerprinting) by collecting an ever-greater abundance of information client-side. But what happens when the device fingerprint can no longer be a reliable unique identifier or, even worse, starts to look just like those presented by bad bots?
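To make the question concrete, here is a minimal sketch of a fingerprint treated as a hash over collected attributes. The attribute names (`ip`, `user_agent`, `canvas_hash`, `cookie_id`), their values, and the `fingerprint` function are assumptions for illustration only; real products collect far more signals and combine them differently.

```python
import hashlib
import json


def fingerprint(attributes: dict) -> str:
    """Hash a set of collected attributes into a single identifier."""
    # Serialize with sorted keys so the same attributes always hash the same.
    canonical = json.dumps(attributes, sort_keys=True, separators=(",", ":"))
    return hashlib.sha256(canonical.encode("utf-8")).hexdigest()


# Hypothetical attributes collected from a real user's browser.
human = {
    "ip": "203.0.113.7",
    "user_agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64)",
    "canvas_hash": "a1b2c3",
    "cookie_id": "abc123",
}

# The same person later, with a different IP address.
same_human_new_ip = {**human, "ip": "198.51.100.24"}

# A bot presenting an exact copy of the human's attributes.
bot_with_copied_attributes = dict(human)

fp_human = fingerprint(human)
fp_new_ip = fingerprint(same_human_new_ip)
fp_bot = fingerprint(bot_with_copied_attributes)

print("same person, new IP, same fingerprint?", fp_human == fp_new_ip)
print("bot with copied attributes, same fingerprint?", fp_human == fp_bot)
```

Both failure modes show up in this toy model: one person no longer maps to one fingerprint once an attribute changes, and a client that presents copied attributes is indistinguishable from the original user.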

The Vanishing Device Fingerprint

Previously, we’ve posted on how bot operators are evading detection based on device fingerprints. They harvest digital fingerprints and use them in combination with anti-detect browsers to trick systems into thinking the request is legitimate. This was one of the early drivers that led Kasada, years ago, to move away from device fingerprinting as a means of distinguishing between humans and bots.

In addition to this, here are several recent trends regarding web privacy that are making the “evidence” gained through device fingerprinting methods even more suspect.

Trend #1 – Use of Residential Proxy Networks

Sure, residential proxy networks are leveraged by bot operators to hide their fraudulent activities behind seemingly innocuous IP addresses. But there’s also an increasing trend of legitimate users moving beyond traditional data-center proxies to hide behind residential proxy networks such as BrightData. Residential proxy networks have become increasingly inexpensive and, in some cases, free; they provide a seemingly endless combination of IP addresses and user agents to mask your activity.

While some of these users hide behind residential proxies for suspect reasons, such as gaining access to restricted content (e.g. bypassing geographic restrictions), many use them to genuinely ensure their privacy online and protect personal data from being stolen. Fingerprinting techniques used to detect those behind proxy networks have become ineffective in light of modern residential proxy networks that mask your identity.

Conclusion: you can’t rely on IP addresses and user agents to distinguish between humans and bad bots as they look the same when hidden behind residential proxies.

Trend #2 – Use of Privacy Mode & Browsers

The recent surge in availability and adoption of private mode and new privacy browsers also makes it difficult to rely on device fingerprinting.

Private browsing modes, such as Chrome Incognito Mode and Edge InPrivate Browsing, reduce the density of information stored about you. These modes take moderate measures to protect your privacy. For example, when you use private browsing, your browser will no longer store your viewing history, cookies accepted, forms completed, etc. once your session is completed. It is estimated that more than 46% of Americans have used a private browsing mode within their browser of choice.

Furthermore, privacy browsers such as Brave, Tor, Yandex, Opera, and customized Firefox take privacy on the web to the next level. They add further layers of privacy: they block or randomize device fingerprinting, offer tracking protection (coupled with privacy search engines such as DuckDuckGo to avoid tracking your search history), and delete cookies, making ad trackers ineffective.

These privacy browsers command about 10% of the total market share today, and they are increasing in popularity. They have enough market share to present major challenges for bot detection solutions reliant on device fingerprinting.

Conclusion: you can’t rely on advanced device identifiers or first-party cookies due to the increasing percentage of users leveraging privacy modes and browsers.

Trend #3 – Elimination of 3rd Party Cookie Tracking

There will always be a substantial percentage of Internet users who don’t use privacy modes or browsers. Google and Microsoft have too much market share. But even for these users, device fingerprinting will become increasingly difficult. One reason is the widely publicized effort by Google to eliminate 3rd party cookie tracking. And while the timeframe has recently been delayed to 2023, this will inevitably make it more difficult to identify suspect behavior.

3rd party cookies collected during the device fingerprinting process are often used as a telltale sign of bot-driven automation. For example, if a particular session with an identified set of 3rd party cookies has attempted 100 logins, that’s an indicator that you’ll want to force it to revalidate and establish a new session.
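The login example can be sketched as a simple per-cookie counter. This is a minimal illustration, assuming each request carries a stable cookie identifier; the `LoginMonitor` class and its method names are hypothetical, and the default limit of 100 is simply the figure from the example.

```python
from collections import Counter

MAX_LOGINS_PER_SESSION = 100  # the figure used in the example


class LoginMonitor:
    """Count login attempts per cookie identifier (hypothetical helper)."""

    def __init__(self, limit: int = MAX_LOGINS_PER_SESSION) -> None:
        self.limit = limit
        self.attempts = Counter()

    def record_attempt(self, cookie_id: str) -> bool:
        """Record one attempt; return True if the session should revalidate."""
        self.attempts[cookie_id] += 1
        return self.attempts[cookie_id] >= self.limit

    def reset(self, cookie_id: str) -> None:
        """Forget a session once it has revalidated."""
        self.attempts.pop(cookie_id, None)


# A small limit keeps the demonstration short.
monitor = LoginMonitor(limit=3)

# A session that keeps the same cookie identifier trips the limit.
stable = [monitor.record_attempt("session-1") for _ in range(3)]
print("stable cookie:", stable)

# A client that presents a fresh identifier on every request never does.
rotating = [monitor.record_attempt(f"fresh-{i}") for i in range(10)]
print("rotating cookie flagged?", any(rotating))
```

The second half of the sketch shows why the signal depends entirely on the identifier being stable: once the cookie is unavailable or changes on every request, the counter never accumulates.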

Conclusion: you soon won’t be able to use 3rd party cookie identifiers within the browser to help identify bot-driven automation.

Moving Beyond Device Fingerprinting

Kasada moved away from device fingerprinting years ago, realizing the increasing limitations of obtaining accurate device fingerprints from both humans and bots. The team has pioneered a new method that doesn’t need to look for a unique identifier that can be associated with a human, but rather looks for the tell-tale, indisputable evidence of automation that presents itself whenever a bot interacts with websites, mobile apps, and APIs.

We call this our client interrogation process. Attributes are invisibly collected from the client and inspected for indicators of automation, including the use of headless browsers and automation frameworks such as Puppeteer and Playwright. So instead of asking whether a request can be uniquely identified as coming from a human, Kasada asks whether any request presenting itself comes from a legitimate browser.

With this approach, there is no need to collect device fingerprints, and no ambiguity from having to assign rules and risk scores to decide between bot and human. Decisions are made on the first request, without ever letting requests into your infrastructure, including those from new bots never seen before, and without ever needing CAPTCHAs to validate.
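This post does not describe how the client interrogation is implemented, so the following is not Kasada’s method. Purely to illustrate what an “indicator of automation” can look like, this sketch checks two publicly known signals: the `navigator.webdriver` flag, which the W3C WebDriver specification defines as true for browsers under automation, and the `HeadlessChrome` token that headless Chrome has historically placed in its default user agent. The `client` dictionary and its keys are hypothetical, and both signals are easy for stealth tooling to hide.

```python
def automation_indicators(client: dict) -> list:
    """Return the automation indicators found in client-reported attributes.

    `client` is a hypothetical dict with optional keys:
      "webdriver"  - the value of navigator.webdriver as reported by the client
      "user_agent" - the User-Agent string
    """
    indicators = []
    if client.get("webdriver") is True:
        indicators.append("navigator.webdriver is true")
    if "HeadlessChrome" in client.get("user_agent", ""):
        indicators.append("HeadlessChrome token in user agent")
    return indicators


headless = {
    "webdriver": True,
    "user_agent": ("Mozilla/5.0 (X11; Linux x86_64) AppleWebKit/537.36 "
                   "(KHTML, like Gecko) HeadlessChrome/119.0.0.0 Safari/537.36"),
}
regular = {
    "webdriver": False,
    "user_agent": ("Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 "
                   "(KHTML, like Gecko) Chrome/119.0.0.0 Safari/537.36"),
}

print("headless client:", automation_indicators(headless))
print("regular client:", automation_indicators(regular))
```

Note that the check asks only whether the client shows evidence of automation; it never tries to establish who the user is, which is the shift in question that the section describes.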

If you are already using a bot mitigation solution, ask your provider about its reliance on outdated device fingerprinting methods and what measures are being taken to address the inevitable increase in false positives and undetected bots resulting from the movement towards a more private web.

Want to see Kasada in action? Request a demo and observe the industry’s most accurate bot detection and lowest false positive rate. You can also run an instant test to see if your website can detect modern bots, including those leveraging open source Puppeteer Stealth and Playwright automation frameworks.